Healthcare has always been about more than information. It’s about what people do with it: the decisions they make, the care they seek, the outcomes that follow. AI is making it possible to reach people with the right message at the right moment in ways our industry has never seen before.

As we discussed in our previous blog post, hundreds of millions of people are already turning to AI platforms each week with their most personal health questions. For organizations with the expertise and commitment to show up credibly within that conversation, the opportunity to improve health understanding at scale is enormous.

But in healthcare, opportunity and responsibility are inseparable. Trust takes years to build and moments to lose, and that’s as true in AI-mediated environments as anywhere else. The organizations that will lead are not necessarily those that move first, but those that move thoughtfully.

Here’s what that looks like in practice.

1. Recognize the growing sensitivity of patient data

As patients engage more deeply with AI health tools, their questions become increasingly personal. Repeated interactions create detailed health data trails, often without users fully realizing it. For organizations that handle this thoughtfully and transparently, there is a genuine opportunity to deepen patient relationships. For those that don’t, the reputational and regulatory consequences can be severe.

2. Understand how data can be used

Health-related data is commercially valuable, and AI platforms may leverage it in ways that aren’t always visible to users. Healthcare organizations that establish clear, ethical data governance frameworks now will be better positioned as regulation catches up, and it will. Getting ahead of this is both the right thing to do and a meaningful strategic differentiator.

3. Treat privacy, consent, and compliance as non-negotiable

Privacy, consent, and regulatory adherence can feel like they slow things down. In reality, they are what makes sustainable AI engagement possible. Organizations that treat these as genuine commitments, rather than final checkpoints, will be the ones patients and HCPs trust as the AI landscape becomes more crowded.

4. Make content quality your strongest asset

AI outputs are only as reliable as the inputs that inform them. Poor or unverified sources produce disinformation and misinformation at scale, and the effect compounds over time in ways that are difficult for patients to detect. The good news is that this dynamic works in reverse too: high-quality, well-structured content from credible sources actively shapes what AI platforms surface. This is the foundation of Generative Engine Optimisation (GEO), and it’s where healthcare communicators have a genuine edge if they invest in it.

5. Plan for hallucinations — don’t be surprised by them

AI systems can produce answers that sound authoritative but are factually wrong. In healthcare, where patients may lack the clinical knowledge to spot an error, this carries real risk. The answer isn’t to avoid AI channels; it’s to ensure credible, verified information is prominent within them, and to monitor actively for inaccuracies in how your therapeutic areas are being represented. Build regular checks into your communications cycle for how AI platforms represent your content and condition areas. Where inaccuracies appear, address the source, not just the symptom.

6. Position AI as a bridge to care, not a destination

Perhaps the most important boundary to reinforce is this: AI should guide patients toward clinical care, not substitute for it. The healthcare organizations that use AI most effectively will be those that design every interaction to strengthen, rather than sidestep, the HCP relationship. Ensure that all AI-enabled patient communications include clear signposting to appropriate clinical care, and reinforce the role of the HCP at every stage of the journey.

7. Earn trust differently for different audiences

Patients and healthcare professionals bring different expectations to AI interactions. Patients prioritize clarity, empathy, and reassurance; HCPs look for precision, evidence, and credibility. A single content approach rarely serves both well. The organizations that excel will develop distinct strategies for each audience, recognizing that trust is earned differently depending on who you are speaking to.

8. Monitor continuously: the landscape will not stand still

AI environments evolve constantly. Algorithms shift, new platforms emerge, and the way content is surfaced changes in ways that aren’t always predictable. Organizations that treat AI strategy as a one-time exercise will find themselves quickly behind. Continuous monitoring and rapid iteration are not optional; they are the baseline for operating responsibly in this space.

AI is already reshaping how patients access information, form beliefs, and make decisions about their health. The organizations that lead in this environment will not be those that move fastest; they will be those that move most responsibly, combining innovation with the scientific rigour and ethical grounding that healthcare communications has always demanded.

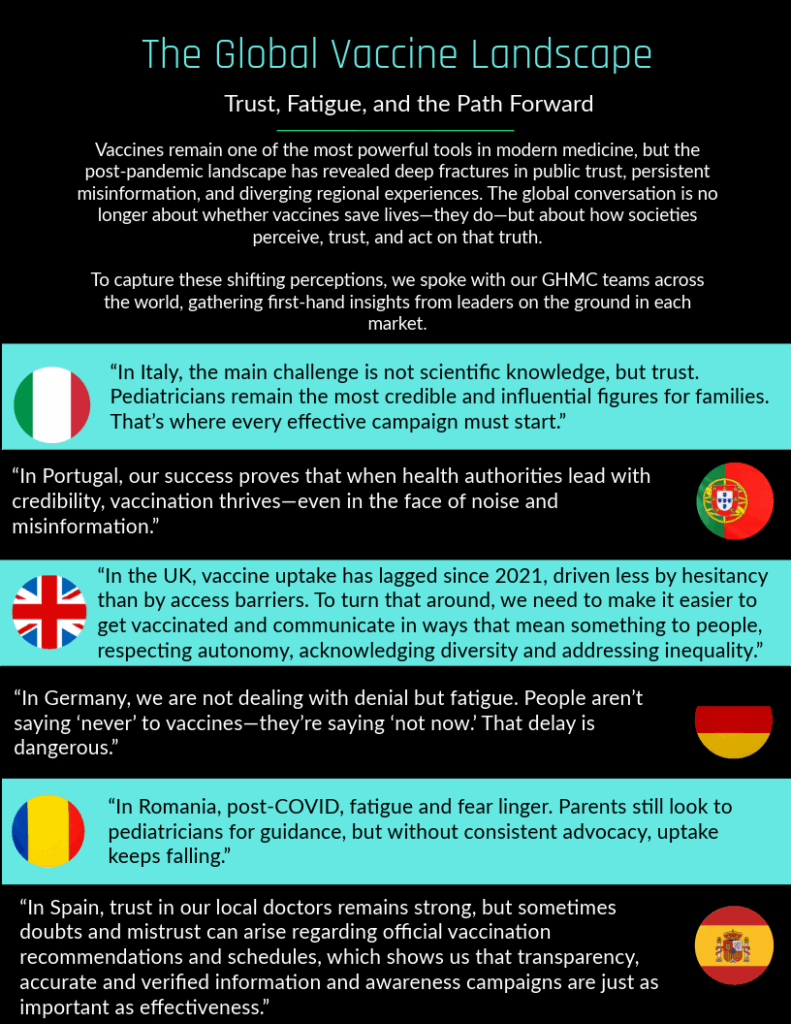

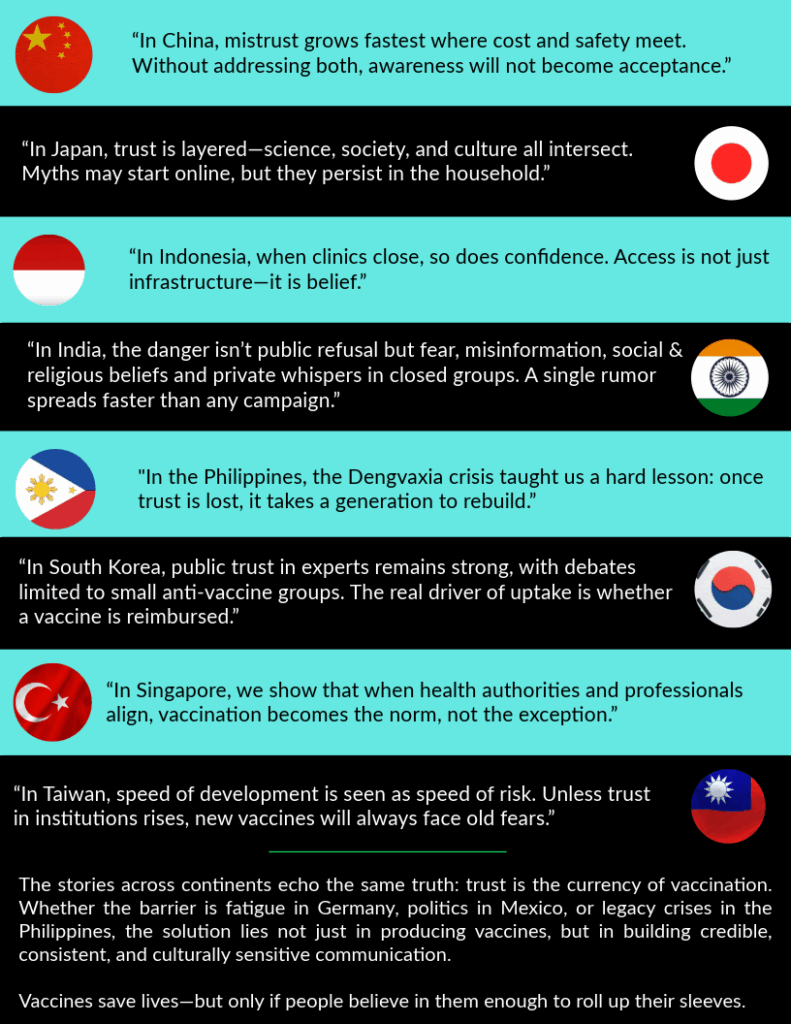

At GHMC, that is precisely the standard we have built our network around, bringing together independent healthcare communications specialists across more than 60 countries, united by a commitment to accuracy, local insight, and genuine impact. Our member agencies are already helping clients navigate the AI landscape with confidence: from content strategy and GEO to compliance frameworks and patient engagement.

If you’re ready to turn the complexity of AI-driven healthcare communications into a clear, responsible, and effective strategy, we’d love to talk. Get in touch here.